The discussion centers on what the IP address 212.32.266.234 reveals about a server’s role, performance signals, and security posture. It emphasizes cautious inference from routing clues, reverse DNS records, and service banners, paired with latency, uptime, and throughput trends. Metrics and logs are cross-validated to identify bottlenecks and establish security baselines. The goal is to map resource queues, cache behavior, and data locality to concrete mitigations, although any interpretation remains contingent on corroborating evidence and context.

What This IP Can Tell You About a Server’s Role

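The IP address 212.32.266.234, analyzed in isolation, yields limited direct information about a server’s role, but it can offer contextual clues about the server’s network placement and potential service exposure. As written, however, it is not a valid IPv4 address: each dotted-decimal octet must fall between 0 and 255, and the third octet here is 266, so any lookup has to start from the intended, corrected address.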
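Before any role inference begins, it is worth confirming that the address parses at all. The sketch below uses Python's standard `ipaddress` module for that check; the helper names `parse_ipv4` and `octet_problems` are hypothetical, and `192.0.2.10` is taken from the reserved documentation range purely as a valid counterexample.

```python
import ipaddress
from typing import List, Optional


def parse_ipv4(text: str) -> Optional[ipaddress.IPv4Address]:
    """Return an IPv4Address, or None if text is not a valid dotted quad."""
    try:
        return ipaddress.IPv4Address(text)
    except ipaddress.AddressValueError:
        return None


def octet_problems(text: str) -> List[str]:
    """List the dotted-decimal parts that are not integers in 0-255."""
    problems = []
    for part in text.split("."):
        if not part.isdigit() or int(part) > 255:
            problems.append(part)
    return problems


print(parse_ipv4("212.32.266.234"))      # None: the third octet exceeds 255
print(octet_problems("212.32.266.234"))  # ['266']
print(parse_ipv4("192.0.2.10"))          # 192.0.2.10
```

Rejecting the malformed string up front keeps later steps (reverse DNS, banner grabs) from silently operating on the wrong host.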

Inference draws on the semantics of the address itself, clues to the server’s identity, network path analysis, and host metadata.

Specific indicators include routing hops, reverse DNS hints, and observed service banners, which can be combined to map a probable function without overstatement.
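A minimal sketch of combining reverse DNS and banner hints, assuming the PTR name and banner strings have already been collected by other means. Routing hops are left out because they require a traceroute. The hint tables, keyword-to-role mappings, and the function name `infer_roles` are hypothetical illustrations, not an established classification. The sketch records which evidence points to which role, so each conclusion stays traceable rather than asserted.

```python
import re
from typing import Dict, List, Optional

# Hypothetical hint tables: keywords and roles are illustrative assumptions.
PTR_HINTS = {
    "mail": "mail server",
    "smtp": "mail server",
    "mx": "mail server",
    "ns": "name server",
    "vpn": "VPN gateway",
    "www": "web server",
}

BANNER_HINTS = [
    (re.compile(r"^SSH-\d\.\d"), "SSH host"),
    (re.compile(r"^220 .*(ESMTP|SMTP)", re.IGNORECASE), "mail server"),
    (re.compile(r"^220 .*FTP", re.IGNORECASE), "FTP server"),
    (re.compile(r"^HTTP/\d(\.\d)?"), "web server"),
]


def infer_roles(ptr_name: Optional[str], banners: List[str]) -> Dict[str, List[str]]:
    """Map each probable role to the evidence that suggested it."""
    evidence: Dict[str, List[str]] = {}
    if ptr_name:
        # "mail2.example.net" -> labels "mail2", "example", "net"
        for label in ptr_name.lower().split("."):
            stem = label.rstrip("0123456789-")  # "mail2" -> "mail"
            if stem in PTR_HINTS:
                evidence.setdefault(PTR_HINTS[stem], []).append(f"PTR label '{label}'")
    for banner in banners:
        for pattern, role in BANNER_HINTS:
            if pattern.search(banner):
                evidence.setdefault(role, []).append(f"banner '{banner[:40]}'")
    return evidence


roles = infer_roles(
    "mail2.example.net",
    ["220 mx.example.net ESMTP ready", "SSH-2.0-OpenSSH_9.6"],
)
print(roles)
```

Two independent sources agreeing on "mail server" is stronger than either alone, which is the kind of corroboration the text calls for.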

Reading Performance Signals: Latency, Uptime, and Throughput

Latency, uptime, and throughput form the core metrics for assessing a server’s performance profile: latency measures round-trip delay, uptime records availability over a defined period, and throughput quantifies processed data per unit time.
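The three definitions translate directly into arithmetic. The sketch below is one possible illustration, assuming latency samples in milliseconds, uptime computed from downtime seconds over a fixed period, and throughput as bytes over an interval; the function names are hypothetical. It uses a nearest-rank percentile for latency because tail values such as p95 expose slow requests that a mean would hide.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile (p in 0-100) of latency samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def uptime_percent(down_seconds: float, period_seconds: float) -> float:
    """Share of the period during which the server was available, in percent."""
    return 100.0 * (period_seconds - down_seconds) / period_seconds


def throughput(bytes_processed: int, interval_seconds: float) -> float:
    """Processed data per unit time, in bytes per second."""
    return bytes_processed / interval_seconds


latencies_ms = [12, 15, 11, 40, 13, 14, 12, 95, 13, 12]
print(percentile(latencies_ms, 50))        # 13
print(percentile(latencies_ms, 95))        # 95
print(uptime_percent(1296, 30 * 86_400))   # about 99.95 (21.6 minutes down in 30 days)
print(throughput(1_500_000_000, 60))       # 25000000.0
```

The gap between the median (13 ms) and p95 (95 ms) in the sample is exactly the kind of signal an average would blur.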

Latency patterns show how responsive the server remains under load, while throughput trends reveal how its capacity evolves over time.
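Both observations can be made quantitative. This sketch assumes paired samples of (concurrent requests, latency in ms) and a series of per-interval throughput readings; grouping by load buckets and a least-squares slope are illustrative choices, not the only way to read these trends, and the function names are hypothetical.

```python
import statistics
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def median_latency_by_load(
    samples: Sequence[Tuple[int, float]], bucket_size: int
) -> Dict[int, float]:
    """Median latency per bucket of concurrent requests, keyed by bucket start."""
    buckets: Dict[int, List[float]] = defaultdict(list)
    for concurrency, latency in samples:
        buckets[(concurrency // bucket_size) * bucket_size].append(latency)
    return {start: statistics.median(v) for start, v in sorted(buckets.items())}


def linear_trend(values: Sequence[float]) -> float:
    """Least-squares slope of values against their index (units per interval)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points for a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


load_samples = [(5, 10), (8, 12), (12, 15), (18, 30), (25, 80), (28, 95)]
print(median_latency_by_load(load_samples, 10))  # {0: 11.0, 10: 22.5, 20: 87.5}
print(linear_trend([100, 110, 120, 130]))        # 10.0 (e.g. MB/s gained per day)
```

A median that climbs steeply between buckets points to saturation, while a positive throughput slope shows demand (or capacity) growing over the observed window.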

Reading the three metrics together supports disciplined interpretation, leaving room to optimize configuration without compromising reliability.

Spotting Security Posture From Metrics and Logs

Examining patterns in metrics and logs allows security posture to be inferred objectively, with a repeatable methodology.

The analysis treats the server’s role as context and correlates access events and anomaly indicators with baseline behavior.
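One way to correlate access events with a baseline is to count authentication failures per source and compare each count against what that source normally produces. The log format below (`auth=… user=… src=…`) is a hypothetical assumption, as are the function names and the default tolerance of three times the baseline; real logs would need their own parser.

```python
import re
from collections import Counter
from typing import Dict, Iterable

# Hypothetical log format, assumed for illustration only:
#   2024-05-01T10:00:00Z auth=fail user=root src=203.0.113.7
LINE = re.compile(r"auth=(?P<result>ok|fail)\s+user=(?P<user>\S+)\s+src=(?P<src>\S+)")


def failures_by_source(lines: Iterable[str]) -> Counter:
    """Count failed authentication events per source address."""
    counts: Counter = Counter()
    for line in lines:
        m = LINE.search(line)
        if m and m.group("result") == "fail":
            counts[m.group("src")] += 1
    return counts


def deviations(
    current: Counter, baseline: Dict[str, float], tolerance: float = 3.0, floor: float = 1.0
) -> Dict[str, int]:
    """Sources whose failures exceed tolerance x baseline (baseline floored at `floor`)."""
    flagged = {}
    for src, count in current.items():
        expected = max(baseline.get(src, 0.0), floor)  # unseen sources get the floor
        if count > tolerance * expected:
            flagged[src] = count
    return flagged


log = ["2024-05-01T10:00:00Z auth=fail user=root src=203.0.113.7"] * 5 + [
    "2024-05-01T10:00:05Z auth=fail user=alice src=198.51.100.4",
    "2024-05-01T10:00:06Z auth=ok user=alice src=198.51.100.4",
]
print(deviations(failures_by_source(log), {"198.51.100.4": 1.0}))  # {'203.0.113.7': 5}
```

The occasional failed login from a known source stays within its baseline, while the burst from an unfamiliar address is flagged for review.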

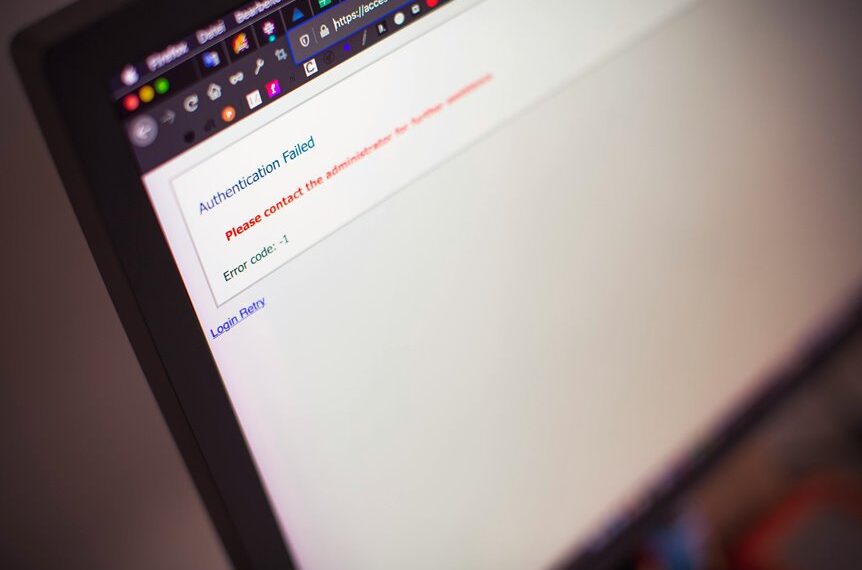

Signals such as steady error rates, unusual spike patterns, and authentication failures are evaluated to delineate where risk concentrates, supporting informed governance without prescriptive bias.
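To separate a steady error rate from an unusual spike, each new reading can be scored against a baseline window. The sketch below uses a simple z-score on the population standard deviation; the function name, baseline length, and threshold of 3.0 are assumptions chosen for illustration rather than recommended settings.

```python
import statistics
from typing import List, Sequence


def spike_indices(
    error_rates: Sequence[float], baseline_len: int, z_threshold: float = 3.0
) -> List[int]:
    """Indices after the baseline window whose rate sits z_threshold std devs above the baseline mean."""
    baseline = error_rates[:baseline_len]
    mean = statistics.fmean(baseline)
    stdev = statistics.pstdev(baseline)
    later = range(baseline_len, len(error_rates))
    if stdev == 0:
        # A perfectly flat baseline: treat any increase as a spike.
        return [i for i in later if error_rates[i] > mean]
    return [i for i in later if (error_rates[i] - mean) / stdev > z_threshold]


# Error rate per interval, as a percentage of requests (illustrative values).
rates = [0.5, 0.6, 0.4, 0.5, 0.6, 0.4, 0.5, 0.6, 3.2, 0.5]
print(spike_indices(rates, baseline_len=6))  # [8]
```

A rate that is elevated but steady would be absorbed into the baseline here, so sustained problems are better caught by comparing against a longer historical window.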

Troubleshooting Bottlenecks: Common Patterns and Fixes

Bottlenecks in server operation manifest as predictable patterns in resource utilization and request flow, which can be diagnosed by applying established performance-analysis methods to the 212.32.266.234 environment. These techniques reveal trends in the latency distribution, identify disk I/O contention, and assess network queueing.
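Queueing explains why a bottleneck often appears suddenly rather than gradually. As a swapped-in simplification, the sketch models a single disk or network queue as M/M/1 (Poisson arrivals, exponential service times, one server), where the mean time in system is W = 1/(μ − λ) and Little's law gives L = λW. Real queues rarely match these assumptions exactly, but the shape of the curve is the useful signal; the function name is hypothetical.

```python
from typing import Dict


def mm1_metrics(arrival_rate: float, service_rate: float) -> Dict[str, float]:
    """Steady-state M/M/1 metrics; rates are in requests per second."""
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival_rate must be below service_rate")
    rho = arrival_rate / service_rate          # utilization of the resource
    w = 1.0 / (service_rate - arrival_rate)    # mean time in system (queueing + service), seconds
    l = arrival_rate * w                       # Little's law: mean requests in system
    return {"utilization": rho, "time_in_system_s": w, "requests_in_system": l}


# A resource that can serve 100 requests/s, at rising load.
for lam in (50, 90, 99):
    print(lam, mm1_metrics(lam, 100))
```

Under this model, going from 50% to 90% utilization raises mean time in system fivefold (20 ms to 100 ms), and 99% raises it to a full second, which is why queue depth deserves attention well before a resource reports full saturation.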

Cache warmth guides mitigation: prioritize data locality, adjust buffer sizes, and implement tiered caching to restore throughput and responsiveness.
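Tiered caching can be sketched as a small hot tier in front of a larger warm tier, both evicting least recently used entries. In this illustration, which is an assumption rather than a prescribed design, entries evicted from the hot tier are demoted to the warm tier instead of being dropped, which keeps recently useful data warm; the tiers are exclusive, and the class names and sizes are hypothetical.

```python
from collections import OrderedDict
from typing import Any, Callable, Hashable, Optional, Tuple

_MISS = object()  # sentinel so cached None values are not mistaken for misses


class LRUTier:
    """Fixed-capacity tier that evicts the least recently used entry."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.items: OrderedDict = OrderedDict()

    def get(self, key: Hashable) -> Any:
        if key not in self.items:
            return _MISS
        self.items.move_to_end(key)  # mark as most recently used
        return self.items[key]

    def pop(self, key: Hashable) -> Any:
        return self.items.pop(key, _MISS)

    def put(self, key: Hashable, value: Any) -> Optional[Tuple[Hashable, Any]]:
        """Insert a value and return the evicted (key, value) pair, if any."""
        self.items[key] = value
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            return self.items.popitem(last=False)
        return None


class TieredCache:
    """Hot tier (L1) in front of a warm tier (L2) in front of a slow loader."""

    def __init__(self, l1_size: int, l2_size: int, loader: Callable[[Hashable], Any]) -> None:
        self.l1 = LRUTier(l1_size)
        self.l2 = LRUTier(l2_size)
        self.loader = loader
        self.stats = {"l1": 0, "l2": 0, "miss": 0}

    def get(self, key: Hashable) -> Any:
        value = self.l1.get(key)
        if value is not _MISS:
            self.stats["l1"] += 1
            return value
        value = self.l2.pop(key)  # exclusive tiers: an L2 hit moves up to L1
        if value is not _MISS:
            self.stats["l2"] += 1
        else:
            self.stats["miss"] += 1
            value = self.loader(key)
        evicted = self.l1.put(key, value)
        if evicted is not None:
            self.l2.put(*evicted)  # demote the L1 victim; L2's own victim is dropped
        return value


cache = TieredCache(2, 4, loader=lambda k: k * 10)
print([cache.get(k) for k in [1, 2, 3, 1, 3, 2]])  # [10, 20, 30, 10, 30, 20]
print(cache.stats)                                  # {'l1': 1, 'l2': 2, 'miss': 3}
```

In the sample run, keys 1 and 2 are served from the warm tier after being pushed out of the hot tier, so the slow loader runs only three times for six lookups.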

Conclusion

From the data dunes, the server’s fingerprint emerges: a map of roles etched in routing hints and banners, each signal a bead in a careful necklace. Latency threads its way through coordinates of uptime, while throughput drums steady rhythms of demand. Logs anchor the truth, while security patterns shadow the door. Bottlenecks reveal themselves as chokepoints in the pipeline, met with cache-wary remedies and buffer-tuned precision. The picture coalesces: informed, methodical, and ready to optimize.